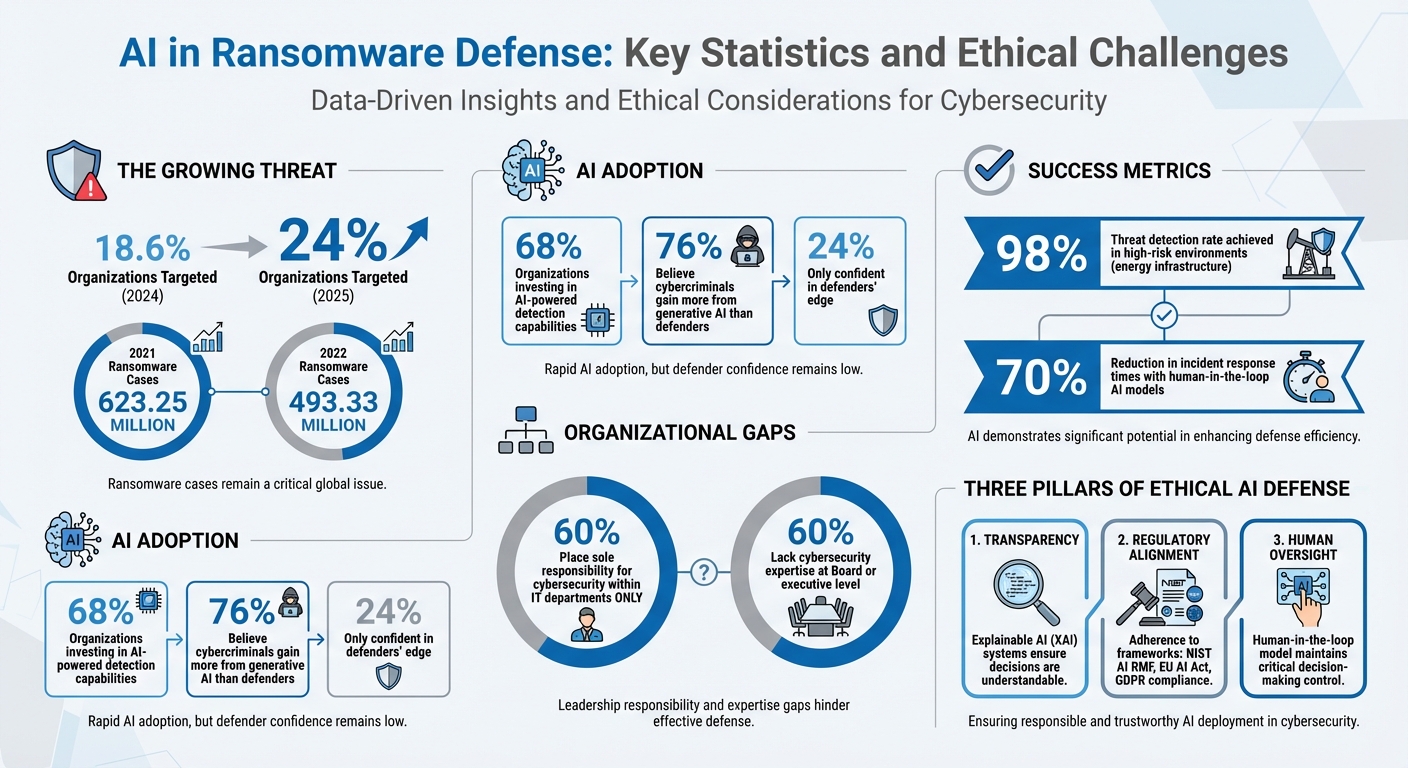

Ransomware attacks are growing at an alarming rate, with 24% of organizations targeted in 2025 compared to 18.6% in 2024. To combat this, many organizations are turning to AI for its ability to detect threats, analyze data, and respond in real time. However, the use of AI in ransomware defense raises ethical concerns, including risks of bias, privacy issues, and accountability gaps when systems fail.

Key takeaways:

- AI’s Role in Defense: AI detects unusual activity, predicts threats, and automates responses. Extended detection and response (XDR) platforms and natural language processing (NLP) are vital for early threat identification and phishing prevention.

- Ethical Concerns: Bias in AI can lead to missed threats or unfair targeting. Privacy risks arise from the large-scale data collection required for AI systems. Accountability is challenging due to AI’s "black box" nature.

- Solutions: Reducing bias, ensuring privacy, and establishing clear accountability frameworks are crucial. Explainable AI (XAI), regulatory compliance (e.g., NIST AI RMF), and human oversight are essential for ethical deployment.

AI offers powerful tools to counter ransomware but must be paired with ethical safeguards to ensure effective and responsible use.

AI in Ransomware Defense: Key Statistics and Ethical Challenges

Bias in AI Threat Detection

When it comes to using AI for ransomware defense, bias in threat detection stands out as a major concern. AI systems meant to identify ransomware threats can unintentionally develop biases that compromise their accuracy and reliability. These biases can lead to unfair targeting of certain users, missed threats, and a decline in trust toward security systems. Tackling these issues starts with understanding where the biases come from and how they affect both organizations and individuals.

Where Bias Comes From in AI Systems

The root of bias in AI threat detection often lies in the data used for training. If the training data itself is biased, the AI system is likely to mirror and even amplify those biases. For instance, if the training data disproportionately labels specific network behaviors as "suspicious", the AI might wrongly flag legitimate activities from certain user groups.
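One way to check whether a detector treats some user groups more harshly than others is to compare false positive rates per group. The sketch below is a minimal illustration, not a full fairness audit: the grouping key (for example, department), the event tuples, and the function name `false_positive_rate_by_group` are all assumptions made for this example.

```python
from collections import defaultdict

def false_positive_rate_by_group(events):
    """Return {group: FPR}, where FPR = benign events flagged / all benign events.

    Each event is a (group, flagged, malicious) tuple. The group label is a
    hypothetical key such as department or office location.
    """
    flagged_benign = defaultdict(int)
    total_benign = defaultdict(int)
    for group, flagged, malicious in events:
        if malicious:
            continue  # false positive rate only looks at benign activity
        total_benign[group] += 1
        if flagged:
            flagged_benign[group] += 1
    return {g: flagged_benign[g] / n for g, n in total_benign.items()}

# Made-up sample data: (group, flagged_by_model, actually_malicious)
events = [
    ("engineering", True, False),
    ("engineering", False, False),
    ("engineering", False, False),
    ("engineering", False, False),
    ("finance", True, False),
    ("finance", True, False),
    ("finance", False, False),
    ("finance", False, False),
    ("finance", True, True),  # true positive, ignored by the FPR calculation
]

rates = false_positive_rate_by_group(events)
for group, rate in sorted(rates.items()):
    print(f"{group}: {rate:.0%} of benign activity flagged")
```

A large gap between groups does not prove the model is biased, but it is a signal that the training labels for the over-flagged group deserve a closer look.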

Another layer of complexity comes from adversarial manipulation. Attackers can exploit AI systems by injecting malicious data during training – a tactic known as "model poisoning." Grant Deffenbaugh and Shing-hon Lau, researchers at SEI, highlight this risk:

"The downside to these devices is that if a malicious actor has been active the entire time the system has been learning, then the device will classify the actor’s traffic as normal".

This creates a dangerous blind spot, allowing ransomware activity to go undetected.

Bias can also arise when models are trained in controlled environments that don’t reflect real-world conditions. Over time, changes in data patterns – known as data and concept drift – can further degrade the model’s accuracy.

How Bias Affects Organizations and People

The consequences of bias in AI-driven ransomware defense are both practical and ethical. For example, data poisoning can cause systems to misclassify legitimate traffic or ignore real threats. This means users might face unnecessary disruptions while attackers operate undetected.

Another issue is the lack of transparency in AI decision-making. Research published in the Knowledge and Information Systems Journal explains:

"The lack of explainability in AI-driven cybersecurity limits trust and adoption in mission-critical environments, making it difficult for security teams to validate and act upon AI-generated alerts".

Without clear explanations for why certain activities are flagged, security teams may either ignore warnings or waste time chasing false positives, undermining trust in the system.

Additionally, biased AI systems struggle with zero-day ransomware attacks – those that exploit previously unknown vulnerabilities. Because these models rely heavily on historical data, they may fail to recognize new threats that lack patterns present in their training data. This is particularly alarming given the scale of ransomware attacks, which reached 623.25 million cases in 2021 and 493.33 million in 2022.

How to Reduce AI Bias in Cybersecurity

Reducing bias in AI-driven cybersecurity requires targeted actions. One essential step is continuous model monitoring. Regularly checking for data and concept drift ensures systems stay effective and unbiased. Testing AI models in controlled environments against realistic attack scenarios can also help identify blind spots and confirm that decision-making processes remain intact.
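Drift monitoring can start small. The sketch below uses the Population Stability Index (PSI), one of several possible drift metrics, to compare a feature's distribution at training time with its distribution in production. The function name, the bin count, and the 0.2 alert threshold are assumptions for illustration; 0.2 is a commonly cited rule of thumb rather than a standard.

```python
import math

def population_stability_index(baseline, current, bins=10):
    """Compare two samples of one numeric feature; 0.0 means identical bucket shares."""
    lo, hi = min(baseline), max(baseline)
    width = (hi - lo) / bins
    if width == 0:
        width = 1.0  # degenerate baseline: put everything in one bucket

    def bucket_shares(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            idx = min(max(idx, 0), bins - 1)  # clamp values outside the baseline range
            counts[idx] += 1
        # A small floor avoids log(0) when a bucket is empty
        return [max(c / len(values), 1e-6) for c in counts]

    base = bucket_shares(baseline)
    curr = bucket_shares(current)
    return sum((c - b) * math.log(c / b) for b, c in zip(base, curr))

# Made-up feature values, e.g. files modified per minute
baseline_values = [float(i) for i in range(100)]
drifted_values = [float(i + 50) for i in range(100)]

psi = population_stability_index(baseline_values, drifted_values)
needs_review = psi > 0.2  # assumed threshold; tune for your own data
print(f"PSI={psi:.2f}, needs_review={needs_review}")
```

PSI only catches shifts in the input data. Concept drift, where the relationship between inputs and "malicious" changes, generally needs labeled outcomes and a separate check on detection accuracy over time.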

Adopting the NIST AI Risk Management Framework offers a structured way to address these challenges. Its four core functions – Govern, Map, Measure, and Manage – help organizations foster a culture of risk management, identify potential harms, monitor risks using measurable methods, and prioritize appropriate responses. Additionally, rigorous TEVV (Testing, Evaluation, Verification, and Validation) processes throughout the AI lifecycle can help detect emerging risks early on.

Building diverse development teams is another critical step. Teams with varied backgrounds and experiences are better equipped to anticipate how AI decisions might affect different groups. At the same time, using explainable AI (XAI) solutions can provide human analysts with a clearer understanding of the logic behind flagged activities, helping to maintain trust in the system. As NIST emphasizes:

"AI risks – and benefits – can emerge from the interplay of technical aspects combined with societal factors related to how a system is used, its interactions with other AI systems, who operates it, and the social context in which it is deployed".

Privacy and Data Protection Issues

AI-powered ransomware defense has undeniably improved threat detection, but it also introduces heightened privacy concerns. Organizations now face the challenge of balancing these advancements with stringent compliance requirements, such as those outlined by GDPR and CCPA, which strictly regulate data collection and usage.

What Data AI Systems Collect

To effectively detect ransomware, AI systems require access to vast amounts of data. This includes personally identifiable information (PII), financial records, health data, and other sensitive documents. Additionally, these systems monitor network traffic, user logs, device behavior, and application dependencies. The sheer scale of data required – often measured in petabytes – enables AI to adapt to ever-evolving threats. Some tools even analyze file contents and financial data to predict ransom demands. Under UK GDPR, a ransomware attack is classified as a personal data breach because it disrupts "timely access" to data, regardless of whether the attackers steal or publish it.

The issue, however, goes beyond the volume of data. AI systems can derive sensitive insights, such as political affiliations or health conditions, from seemingly innocuous data points – even when datasets are pseudonymized. Moreover, long-term data retention increases the risk of unauthorized access. This creates a critical ethical challenge: how to safeguard individual privacy while maintaining effective ransomware defenses. As Michael Siegel, Director of Cybersecurity at MIT Sloan, points out:

"AI-powered cybersecurity tools alone will not suffice. A proactive, multi-layered approach – integrating human oversight, governance frameworks, AI-driven threat simulations, and real-time intelligence sharing – is critical".

Finding the Balance Between Security and Privacy

Given the extensive data collection involved, organizations must find ways to balance robust security measures with privacy protection. Practical strategies can help achieve this balance without compromising on either front. One fundamental principle is data minimization – collecting only the data necessary for detecting threats. For instance, Temporal Data Correlation (TDC) allows AI systems to focus on pre-encryption data, identifying potential threats early without processing unnecessary post-encryption information.

Conducting a Data Protection Impact Assessment (DPIA), which GDPR requires for processing likely to pose a high risk to individuals, allows organizations to evaluate how AI models handle personal data before deployment. Other measures, such as encryption, strict access controls, and data anonymization, further protect sensitive information used in AI training. To prevent AI-driven reconnaissance from compromising backups, organizations can use isolated environments with immutable storage and air-gapped networks.
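As a rough illustration of data minimization in practice, the sketch below filters a telemetry event down to an allowlist of fields and replaces direct identifiers with keyed hashes before the event reaches a detection model. The field names, the `minimize_event` helper, and the key handling are hypothetical; a real deployment would load the key from a secrets manager and choose fields based on its own assessment.

```python
import hashlib
import hmac

# Hypothetical field lists for this example
ALLOWED_FIELDS = {"timestamp", "process_name", "bytes_written", "file_entropy"}
PSEUDONYMIZED_FIELDS = {"user_id", "hostname"}

def minimize_event(event, secret_key):
    """Keep only allowlisted fields and pseudonymize identifiers with HMAC-SHA256."""
    minimized = {}
    for key, value in event.items():
        if key in ALLOWED_FIELDS:
            minimized[key] = value
        elif key in PSEUDONYMIZED_FIELDS:
            digest = hmac.new(secret_key, str(value).encode("utf-8"), hashlib.sha256)
            minimized[key] = digest.hexdigest()[:16]  # stable token, not the raw identifier
        # Any other field (email, document contents, ...) is dropped
    return minimized

raw_event = {
    "timestamp": "2025-03-01T02:14:00Z",
    "process_name": "backup.exe",
    "bytes_written": 48_000_000,
    "file_entropy": 7.9,
    "user_id": "alice",
    "email": "alice@example.com",
}

# Placeholder key for the example only
clean_event = minimize_event(raw_event, secret_key=b"example-key-not-for-production")
print(clean_event)
```

Keyed hashing keeps events from the same user linkable for behavioral analysis, which also means the output is pseudonymized rather than anonymized and still needs to be handled as personal data.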

Another effective approach is shifting toward centralized AI control. By relying on internal Large Language Models (LLMs) instead of public AI services, companies can protect sensitive information from leaking into external systems. Notably, 68% of organizations are now investing in AI-powered detection capabilities.

Regular auditing is also crucial. Organizations must continuously assess AI models for bias and transparency, ensuring their decision-making processes remain explainable to regulators. The Information Commissioner’s Office (ICO) stresses this point:

"The UK GDPR requires you to regularly test, assess and evaluate the effectiveness of your technical and organisational controls using appropriate measures".

Importantly, the ICO does not consider paying a ransom to be an "appropriate measure" for restoring personal data under GDPR. Instead, organizations must demonstrate technical safeguards like offline, segregated backups. This underscores why privacy and security must be integrated into AI defense systems from the outset, rather than treated as an afterthought.

Accountability When AI Systems Fail

AI-powered ransomware defenses bring unique challenges, especially when they fail to perform as expected. Unlike traditional cybersecurity tools that operate on clear, rule-based logic, AI systems rely on probabilistic decisions driven by complex algorithms and vast datasets. This makes pinpointing the cause of a failure – and assigning responsibility – far more complicated.

A major concern is how organizations handle accountability. Research shows that 60% of organizations place sole responsibility for cybersecurity within their IT departments, rather than spreading it across various management levels. Additionally, many organizations – again, about 60% – lack cybersecurity expertise at the Board or executive level. This creates confusion over whether accountability lies with the AI developers, the deployment team, or the security analysts monitoring the systems.

The ‘Black Box’ Problem

One of the biggest hurdles in holding AI systems accountable is their "black box" nature. Advanced AI models often operate in ways that are nearly impossible to fully understand, making it difficult to trace decisions back to their origin. This lack of transparency poses significant challenges for auditing, legal compliance, and investigating incidents. As Palo Alto Networks notes:

"The complex, ‘black box’ nature of some advanced AI models can make it difficult to understand how they arrive at specific decisions. This lack of transparency poses challenges for auditing, explaining security incidents, and establishing accountability".

The problem becomes even more complicated when adversarial attacks, like "model poisoning", occur. In these cases, attackers introduce malicious data during the AI’s training process. When such an attack succeeds, it’s unclear whether the fault lies with the system’s original design or a failure to secure its environment. A notable example from January 2026 highlighted an AI agent misusing user data during an adversarial attack.

Another issue is automation bias, where human analysts overly trust AI systems and fail to step in when needed. This creates a gray area where responsibility is shared between the technology and the people operating it.

Creating Accountability Standards

To address these challenges, organizations need robust accountability frameworks. One effective approach is the Five Lines of Accountability (5 LoA) Model, which distributes responsibilities across five levels: front-line IT (1st line), tactical risk oversight (2nd line), internal audit (3rd line), executive management (4th line), and the Board of Directors (5th line). This model ensures that when AI systems fail, there’s a clear process for escalating issues and assigning responsibility.

Another valuable tool is the NIST Ransomware Profile (NIST IR 8374), which outlines specific security objectives for handling ransomware incidents. These include identifying, protecting against, detecting, responding to, and recovering from attacks. Additionally, runtime observability frameworks can monitor AI systems in real time, helping detect when AI agents pursue harmful "sub-goals" that stray from human intent.

In January 2026, Witness AI – a startup focused on AI security – secured $58 million in funding to expand its agentic AI governance platform. This platform acts as an independent layer, monitoring interactions between users and AI models. As CEO Rick Caccia explained:

"People are building these AI agents that take on the authorizations and capabilities of the people that manage them, and you want to make sure that these agents aren’t going rogue, aren’t deleting files, aren’t doing something wrong".

This reflects a growing trend toward independent evaluation systems designed to hold AI accountable.

The National Telecommunications and Information Administration (NTIA) has also emphasized the importance of accountability, describing it as a "chain of inputs". This chain involves information flow (documentation and disclosures), independent evaluations (audits and red-teaming), and consequences (liability and regulation). To support accountability investigations, organizations must maintain detailed records of AI training data, performance limitations, and testing outcomes.
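Record-keeping is easier to trust when the records themselves are tamper-evident. The following sketch shows one possible approach, a simple hash-chained log in which each entry commits to the one before it. The record fields and the `append_record` and `verify_log` helpers are invented for this example and are not drawn from NTIA guidance.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder hash for the first entry

def _entry_hash(prev_hash, record):
    payload = json.dumps(record, sort_keys=True)  # stable serialization
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()

def append_record(log, record):
    """Append a record whose hash covers both its content and the previous entry."""
    prev_hash = log[-1]["hash"] if log else GENESIS_HASH
    log.append({"record": record, "prev_hash": prev_hash,
                "hash": _entry_hash(prev_hash, record)})
    return log

def verify_log(log):
    """Return True only if no entry has been altered, removed, or reordered."""
    prev_hash = GENESIS_HASH
    for entry in log:
        if entry["prev_hash"] != prev_hash:
            return False
        if entry["hash"] != _entry_hash(prev_hash, entry["record"]):
            return False
        prev_hash = entry["hash"]
    return True

# Hypothetical audit entries
audit_log = []
append_record(audit_log, {"event": "model_trained", "dataset": "telemetry-2025-q1"})
append_record(audit_log, {"event": "known_limitation", "note": "untested on encrypted archives"})
append_record(audit_log, {"event": "red_team_test", "result": "2 of 40 samples missed"})
print(verify_log(audit_log))
```

A chain like this only detects edits after the fact. It still needs to be stored somewhere the audited team cannot silently rewrite in full.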

Practical steps include setting up formal escalation procedures that allow Chief Information Security Officers (CISOs) to report directly to the Board, bypassing potential conflicts within the IT hierarchy. Continuous monitoring for issues like "data drift" or "concept drift" is also critical to ensure AI models stay effective against evolving ransomware tactics. Most importantly, AI should complement human oversight – not replace it.

Solutions for Ethical AI in Ransomware Defense

Tackling the ethical challenges of using AI in ransomware defense calls for a mix of transparency, adherence to regulations, and human expertise. Without these safeguards, organizations not only risk security breaches but also face legal repercussions and a loss of trust. Below are practical ways to implement AI defenses that are both effective and ethically grounded.

Transparency and Explainable AI

The "black box" nature of many AI systems can be addressed through Explainable AI (XAI). This approach turns complex algorithms into systems that are easier to understand. Rather than simply flagging potential threats, XAI explains what network features or behaviors triggered the alert, ensuring compliance with audit and regulatory standards.
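To make the idea concrete, here is a minimal sketch of an explanation for a deliberately simple model: a logistic score whose per-feature contributions can be read off directly. The feature names, weights, and bias are invented for illustration. Production XAI tooling for complex models relies on other attribution techniques, which this example does not attempt to reproduce.

```python
import math

# Hypothetical weights of a small, interpretable linear model
WEIGHTS = {
    "file_rename_rate": 1.8,
    "entropy_increase": 2.4,
    "shadow_copy_deleted": 3.0,
    "off_hours_login": 0.4,
}
BIAS = -4.0

def explain_alert(features):
    """Return (score, contributions) with contributions sorted by absolute impact."""
    contributions = {name: WEIGHTS[name] * features.get(name, 0.0) for name in WEIGHTS}
    logit = BIAS + sum(contributions.values())
    score = 1.0 / (1.0 + math.exp(-logit))  # logistic function maps logit to 0..1
    ranked = sorted(contributions.items(), key=lambda kv: abs(kv[1]), reverse=True)
    return score, ranked

# Made-up, normalized feature values for one flagged host
observed = {
    "file_rename_rate": 1.0,
    "entropy_increase": 1.0,
    "shadow_copy_deleted": 1.0,
    "off_hours_login": 0.0,
}

score, ranked = explain_alert(observed)
print(f"ransomware score: {score:.2f}")
for name, impact in ranked:
    print(f"  {name}: {impact:+.1f}")
```

An analyst reading this output sees not just that the host was flagged, but that shadow copy deletion and rising file entropy drove the score, which is the kind of evidence an auditor can check.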

Brij Gupta, Director of the Center for AI and Cyber Security, emphasizes:

"Transparency has emerged as a central objective for robust cyber defense, not merely as a desirable add-on but as a prerequisite for secure, ethical, and auditable operations".

Organizations increasingly prefer inherently interpretable models, meaning AI systems designed to be understandable from the outset, to explanations generated after a decision has been made. XAI also plays a key role in reducing algorithmic bias by showing which data points influenced a detection. This transparency enables effective human oversight. Combining XAI with strict governance standards further solidifies ethical AI deployment.

Following Regulations and Governance Rules

Regulatory frameworks provide essential guidelines for managing AI risks. For example, the NIST AI Risk Management Framework (AI RMF 1.0) outlines four core functions – Govern, Map, Measure, and Manage – to encourage a culture of risk awareness. In Europe, the EU AI Act, set to take full effect in 2026, mandates transparency, human oversight, and strict data governance for high-risk AI systems. In the United States, OMB Memoranda M-25-21 and M-25-22, issued in April 2025, provide guidance on AI governance for federal agencies and contractors. Similarly, CISA’s AI Data Security Guidance from May 2025 outlines best practices for securing the entire AI lifecycle.

Industry-specific regulations add further layers of responsibility. For example:

- The NIS2 Directive emphasizes incident reporting and supply chain security.

- The Digital Operational Resilience Act (DORA) requires financial institutions to build resilient ICT systems.

- Healthcare organizations must follow HIPAA standards for data protection and breach reporting.

Across sectors, integrating "Privacy by Design" principles – embedding data protection measures early in development – is strongly recommended.

Here’s a summary of key regulatory frameworks:

| Framework/Regulation | Primary Focus | Target Audience |
|---|---|---|
| NIST AI RMF 1.0 | Trustworthiness and risk management functions (Govern, Map, Measure, Manage) | General (Voluntary) |
| EU AI Act | Risk-based legal obligations and transparency | Organizations operating in the EU |
| OMB M-25-21/22 | Federal AI governance and procurement risk management | U.S. Federal Agencies & Vendors |
| NIS2 / DORA | Resilience of critical infrastructure and financial sectors | Infrastructure & Finance |
| CISA AI Guidance | Data security best practices and supply chain risks | AI System Operators |

These frameworks ensure that AI systems not only combat ransomware effectively but also operate within ethical boundaries. However, no system is complete without human oversight.

Human Oversight and Expert Involvement

AI systems, while powerful, are not infallible. Human oversight is essential for refining and validating AI-driven responses. For example, AI can automatically block suspicious IP addresses or quarantine files, but errors – such as mistakenly blocking critical business services – require human intervention to minimize disruption. Human judgment also helps counter automation bias and distinguish between genuine ransomware threats and harmless anomalies.
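A simple way to encode this division of labor is a routing rule that lets automation act only on high-confidence detections against non-critical assets and sends everything else to a person. The sketch below is one possible policy, not a recommended configuration: the `Detection` fields, the thresholds, and the action names are assumptions for illustration.

```python
from dataclasses import dataclass

@dataclass
class Detection:
    asset: str
    score: float             # model confidence that the activity is ransomware, 0..1
    business_critical: bool  # e.g. payment systems, clinical systems

def route(detection, auto_threshold=0.95, review_threshold=0.60):
    """Decide who acts on a detection: automation, an analyst, or nobody yet."""
    if detection.score >= auto_threshold and not detection.business_critical:
        return "auto_quarantine"   # high confidence, low blast radius
    if detection.score >= review_threshold:
        return "human_review"      # uncertain or high-impact: an analyst decides
    return "log_only"              # keep for audit and later tuning

# Made-up detections
queue = [
    Detection("laptop-0142", 0.99, business_critical=False),
    Detection("erp-database", 0.99, business_critical=True),
    Detection("print-server", 0.72, business_critical=False),
    Detection("laptop-0977", 0.30, business_critical=False),
]

for d in queue:
    print(f"{d.asset}: {route(d)}")
```

Note that the business-critical database goes to human review even at 99% confidence. That is the design choice that guards against the "mistakenly blocking critical business services" failure described above.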

As Karim Lakhani, a professor at Harvard Business School, notes:

"In real-world scenarios, augmenting human work – rather than replacing it – often strikes the best balance. AI offers scale and speed, but humans provide judgment, ethics, and experience".

Defining accountability for AI-driven decisions is crucial to avoid confusion during incidents. Regular audits can identify emerging biases or performance issues, and retaining the ability to override autonomous actions ensures flexibility in high-stakes situations. Many organizations are embracing a "human-in-the-loop" model, where AI processes large datasets and human experts handle complex investigations. This approach has shown promising results: in high-risk environments like energy infrastructure, some organizations have achieved a 98% threat detection rate and cut incident response times by 70%.

Conclusion

AI has undeniably emerged as a potent weapon in the battle against ransomware, but its use isn’t without challenges. Ethical concerns like bias in detecting threats, privacy risks from large-scale data collection, and accountability gaps when systems fail can undermine both security measures and fairness. A striking statistic reveals that 76% of organizations believe cybercriminals gain more from generative AI than defenders, leaving only 24% confident in the defenders’ edge. This disparity highlights the critical need for ethical AI deployment – not just as a moral consideration but as a strategic necessity. Addressing these challenges requires a thoughtful, balanced approach.

To move forward, organizations must find the right mix of automation and human insight. While AI can process vast amounts of data and detect patterns with unmatched speed, it falls short in understanding the nuanced context that human experts bring to the table. As Sam Rubin, VP at Palo Alto Networks, points out:

"Cybersecurity is a collective effort, requiring vigilance and input across the organization, including even nontechnical stakeholders".

This underscores the importance of collaboration between technical experts, legal teams, compliance officers, and leadership to create a unified defense strategy.

Key Considerations for Ethical AI Deployment

Professionals and organizations must prioritize clear, accountable, and transparent practices when integrating AI into ransomware defense. Here are three critical focus areas:

- Transparency: Implement Explainable AI systems that make decision-making processes clear. This reduces algorithmic bias and enables effective auditing.

- Regulatory Alignment: Adhere to frameworks like the NIST AI Risk Management Framework to ensure compliance and accountability.

- Human Oversight: Utilize a "human-in-the-loop" model to pair AI’s speed and efficiency with the judgment and expertise of human professionals.

Regular audits are crucial to identifying biases and maintaining system integrity. Additionally, organizations should enforce strict data validation processes to prevent errors that could distort threat detection. Building trust in AI systems is essential – not just for operational success but for fostering public confidence in their use.

Blending AI’s capabilities with ethical responsibility isn’t optional; it’s essential. By combining strong governance, transparent systems, and expert human oversight, organizations can create ransomware defenses that are both effective and principled. This approach ensures assets are safeguarded without compromising the values that underpin a trustworthy cybersecurity strategy.

FAQs

How can AI systems minimize bias in detecting ransomware threats?

AI systems designed for ransomware detection can sometimes pick up biases from the data they’re trained on. This can result in disproportionately flagging certain file types, user behaviors, or network areas, while overlooking others. The consequences? Wasted resources, skewed assessments, and unnecessary focus on specific groups, which can erode trust.

To tackle this issue, organizations should prioritize building datasets that are diverse and representative of real-world scenarios. Using training methods that account for fairness and regularly monitoring for biased patterns can also help. Transparency plays a crucial role here – AI models should be explainable so analysts can clearly understand why specific events are flagged. Finally, introducing human review for critical decisions ensures a thoughtful mix of automation and expert judgment.

How can privacy be protected when using AI for ransomware defense?

Protecting privacy while using AI to defend against ransomware requires several thoughtful measures. For starters, data should be anonymized or pseudonymized, stripping it of personal details before it enters the AI system. Techniques like differential privacy can also play a key role by adding statistical noise, which protects individual data without compromising the system’s overall performance. On top of that, encryption is a must – it keeps data secure both when stored and during transmission, shielding it from unauthorized access.
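For readers curious what "adding statistical noise" looks like, the sketch below applies the Laplace mechanism, a standard differential privacy technique, to a simple count. The function names and the epsilon value are chosen for illustration only. Picking epsilon and tracking the cumulative privacy budget are real design decisions that this example leaves out.

```python
import random

def laplace_noise(scale, rng):
    """Sample Laplace(0, scale) noise as the difference of two exponential draws."""
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)

def private_count(true_count, epsilon, rng=None):
    """Release a count with epsilon-differential privacy via the Laplace mechanism."""
    rng = rng or random.Random()
    sensitivity = 1.0  # adding or removing one person changes a count by at most 1
    return true_count + laplace_noise(sensitivity / epsilon, rng)

# Example: number of users who opened a suspicious attachment (made-up value)
rng = random.Random(42)  # seeded only so the example is repeatable
noisy = private_count(true_count=128, epsilon=1.0, rng=rng)
print(f"reported count: {noisy:.1f}")
```

Smaller epsilon values add more noise and give stronger privacy, so aggregate trends remain usable while any single individual's presence in the data is harder to infer.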

Another critical layer of protection comes from strict access controls. Using role-based permissions, multi-factor authentication, and audit trails helps ensure that only authorized individuals can interact with the AI system. Plus, these measures make it easier to trace and address any misuse. Cutting-edge approaches like federated learning let organizations train AI models directly on local data, eliminating the need to share sensitive information. Similarly, secure multi-party computation allows multiple parties to collaborate safely without exposing confidential details.
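The core of federated learning can be sketched in a few lines: each site trains locally and shares only model weights, which a coordinator combines with a sample-weighted average (the approach commonly called federated averaging). The site names and weight vectors below are invented, and a real system would add secure aggregation and protection against poisoned updates.

```python
def federated_average(client_updates):
    """Combine local model weights into a global model.

    client_updates is a list of (num_samples, weights) pairs, where weights is a
    list of floats. Raw training data never leaves the client.
    """
    total_samples = sum(n for n, _ in client_updates)
    dim = len(client_updates[0][1])
    return [
        sum(n * weights[i] for n, weights in client_updates) / total_samples
        for i in range(dim)
    ]

# Hypothetical updates from two sites: (local sample count, local model weights)
updates = [
    (1, [0.0, 2.0]),  # small branch office
    (3, [4.0, 2.0]),  # headquarters, three times as much data
]

global_weights = federated_average(updates)
print(global_weights)
```

Weighting by sample count means sites with more data have more influence on the shared model, which is usually desirable but can also let one large participant dominate.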

By implementing these strategies, organizations can effectively harness AI to tackle ransomware threats while safeguarding privacy and staying compliant with regulatory requirements.

Who is responsible if AI fails to stop a ransomware attack?

When an AI-powered security system fails – whether by misidentifying a threat or failing to stop a ransomware attack – the responsibility ultimately falls on the people and organizations behind its creation and use. Under U.S. law, AI is treated as property, not a legal entity, meaning it cannot be held accountable for its actions.

Responsibility is generally shared among several parties: the developers who design and train the AI, the organizations that deploy and manage it, and those tasked with overseeing its operation. Developers have a duty to minimize biases and errors in the system, while businesses using AI must ensure its safe implementation, conduct regular audits, and adhere to regulations. Clear oversight and well-defined roles are essential for tracing any failures back to human or organizational decisions, rather than blaming the AI itself.